As the popularity of artificial intelligence (AI) continues to soar, more individuals are exploring the creation of simple chatbot applications to streamline daily tasks. However, what happens when these applications experience rapid growth and need to scale efficiently? This is where an observability tool proves invaluable: it lets you see how your application is performing and helps you maintain optimal functionality and minimize downtime. In this blog post, you’ll create a simple generative AI application, learn about potential problems that AI can introduce, and discover how to use New Relic to mitigate these issues before and as they arise.

Understanding AI technologies

What is AI in reality, beyond the ideas that the media and the current hype have given us? AI is the simulation of human intelligence in machines programmed to think and learn like humans.

The core characteristics of AI include the ability to learn, reason, problem-solve, and perceive the world, including recognizing objects, speech, and text. If you’ve asked Alexa to tell you a knock-knock joke, you’ve already used AI. Other popular AI tools today include Siri, Google Assistant, and Cortana. These virtual assistants are examples of narrow AI—also known as weak AI—as they’re designed to perform specific tasks, but cannot understand or reason beyond those tasks.

If you’ve asked ChatGPT for help refining an email, then you’ve used what’s known as generative AI, a subset of narrow AI that focuses on creating content within defined constraints based on its training. This is the kind of AI we’ll be discussing in this blog post.

Generative AI mechanics

Under the hood, AI applications rely on several technical layers that work together:

- Large language model (LLM): A deep learning model, pre-trained on a vast amount of data, that can recognize and generate text, and more. Popular options include OpenAI’s GPT models (the models behind ChatGPT), the foundation models available through Amazon Bedrock, and the open models hosted on Hugging Face, just to name a few. The LLM used in the application discussed in this blog is OpenAI’s gpt-3.5-turbo model.

- Orchestration framework: A tool that coordinates how the LLM interacts with an AI application’s storage and registry, also known as the vector database or vector data source. LangChain, a popular framework, is used in the development of the chatbot discussed in this blog.

- Vector databases: A storage or registry used to store a vast amount of data that has been split into chunks that can be queried based on similarity searches. Some popular vector data sources include Pinecone, Weaviate, and Facebook AI Similarity Search (FAISS). The vector database used for the chatbot in this blog is FAISS.

- Retrieval-augmented generation (RAG): The process that enhances the efficacy of LLM apps by:

  - Fetching relevant documents or snippets from a vector data source when provided a user prompt/question.

  - Generating a detailed and informed response that incorporates knowledge from the retrieved documents.

  - Returning the response to the user.

  It’s worth noting that RAG can be used in chatbots to help AI applications provide more informed, human-like, and contextually relevant responses.

- Infrastructure: The component required for users to access AI applications, whether on physical GPUs and CPUs or in the cloud, such as Amazon Web Services (AWS), Azure, and Google Cloud Platform (GCP). The chatbot discussed in this blog runs on the local host.
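To make the RAG steps listed above more concrete, here’s a minimal sketch that uses only the Python standard library. It is a toy, not the implementation used in this post: the bag-of-words "embedding" stands in for a real embedding model, the helper names (embed, cosine, retrieve, build_prompt) are hypothetical, and the sample sentences are made up for illustration. The sketch stops at building the prompt that would be sent to an LLM.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts (a stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(chunks: list[str], question: str, k: int = 2) -> list[str]:
    """Step 1: fetch the k chunks most similar to the question."""
    q = embed(question)
    ranked = sorted(chunks, key=lambda c: cosine(embed(c), q), reverse=True)
    return ranked[:k]


def build_prompt(question: str, docs: list[str]) -> str:
    """Step 2 (input side): combine the question with the retrieved context."""
    context = " ".join(docs)
    return (
        f"Answer the following question: {question}\n"
        f"Using only this context: {context}"
    )


# Made-up sample chunks, for illustration only.
chunks = [
    "Beans and lentils are good sources of plant protein.",
    "Leafy greens provide iron and calcium.",
    "Regular sleep supports recovery after exercise.",
]

question = "What are good sources of protein?"
top_docs = retrieve(chunks, question, k=1)
prompt = build_prompt(question, top_docs)
print(prompt)  # Step 3 would send this prompt to the LLM and return its answer.
```

In a real application, the vector database and the embedding model take over the work of embed and retrieve, and the orchestration framework handles the prompt assembly.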

Creating the generative AI application

The first thing we’re going to do is create our generative AI application, which will serve as a virtual health coach. We’re going to use an OpenAI GPT model and the LangChain framework to develop a health app that can take health-related questions as input, and provide guidance and support to help users adopt healthy habits.

The development process

Under the hood, this AI assistant will:

- Use a YouTube video that will serve as the “brain” (or primary source provided) of the health coach.

- Process the transcript from the video.

- Store chunks of the transcript in a vector index, using the FAISS library, to create a searchable vector database.

This diagram provides insight into the technical architectural flow of the virtual health coach chatbot.
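To illustrate what "processing the transcript" involves, here’s a minimal sketch of splitting text into overlapping chunks. It is a simplified, fixed-window stand-in and not LangChain’s RecursiveCharacterTextSplitter, which also tries to split on natural separators such as paragraphs and sentences. The function name split_with_overlap and the sample text are hypothetical; the chunk size of 1,000 characters and overlap of 100 mirror the values used in this post’s code.

```python
def split_with_overlap(
    text: str, chunk_size: int = 1000, chunk_overlap: int = 100
) -> list[str]:
    """Split text into fixed-size windows that share chunk_overlap characters."""
    if chunk_overlap >= chunk_size:
        raise ValueError("chunk_overlap must be smaller than chunk_size")
    if not text:
        return []
    step = chunk_size - chunk_overlap
    # Stop early enough that the last chunk is not fully contained in the previous one.
    last_start = max(len(text) - chunk_overlap, 1)
    return [text[i : i + chunk_size] for i in range(0, last_start, step)]


# Made-up sample text of 2,500 characters.
sample = "".join(str(i % 10) for i in range(2500))
pieces = split_with_overlap(sample)
print([len(p) for p in pieces])
```

The overlap means the end of one chunk is repeated at the start of the next, so a sentence that straddles a boundary still appears whole in at least one chunk.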

The process described above is captured in the create_vector_db_from_youtube_url(video_url:str) function as shown here:

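    # Imports assume the classic `langchain` package layout; newer releases differ.
    from langchain.document_loaders import YoutubeLoader
    from langchain.embeddings.openai import OpenAIEmbeddings
    from langchain.text_splitter import RecursiveCharacterTextSplitter
    from langchain.vectorstores import FAISS

    embeddings = OpenAIEmbeddings()  # assumption: the original does not show how `embeddings` is defined

    def create_vector_db_from_youtube_url(video_url: str) -> FAISS:
        loader = YoutubeLoader.from_youtube_url(video_url)
        transcript = loader.load()
        # Split the transcript into 1,000-character chunks with a 100-character overlap.
        text_splitter = RecursiveCharacterTextSplitter(chunk_size=1000, chunk_overlap=100)
        docs = text_splitter.split_documents(transcript)
        db = FAISS.from_documents(docs, embeddings)
        return db

The next step in creating the “brain” for the AI assistant is to: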

- Pass in the FAISS database that’ll be used, the user’s questions, and the number of documents to retrieve from the YouTube transcript to stay within the token context window.

      def get_response_from_query(db, query, k=4):

- Perform a similarity search in the FAISS database based on the user’s question.

          docs = db.similarity_search(query, k=k)
          docs_page_content = " ".join([d.page_content for d in docs])

- Initialize the OpenAI gpt-3.5-turbo LLM version.

          llm = OpenAI(model="gpt-3.5-turbo")

- Set up a prompt template that instructs the model to act as a health coach to answer questions based on the video transcript that’s provided as the primary source of information.

          prompt = PromptTemplate(
              input_variables=["question", "docs"],
              template="""
              You are a helpful Virtual Health Coach that can answer questions about the plant based lifestyle
              based on the video's transcript.
              Answer the following question: {question}
              By searching the following video transcript: {docs}
              Only use the factual information from the transcript to answer the question.
              If you feel like you don't have enough information to answer the question, say "I don't know"
              Your answers should be detailed.
              """,
          )

- Create a chain of operations for the language model based on the specified LLM and prompt template.

          chain = LLMChain(llm=llm, prompt=prompt)

- Return a formatted response and the documents used for generating the response.

          response = chain.run(question=query, docs=docs_page_content)
          response = response.replace("\n", "")  # formatting
          return response, docs

The process described above is an example of how RAG works since the function:

- Takes the user’s input (or prompt) to fetch relevant documents from the vector data source.

- Processes the prompt and the retrieved data to generate an informed response from the documents.

- Returns a formatted response to the user.
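As a side note on the k parameter mentioned earlier: the number of retrieved chunks has to leave room in the context window for the prompt instructions and the answer. Here’s a rough, hedged sketch of that arithmetic. The function name max_docs_within_budget is hypothetical, the figure of about four characters per token is only a common rule of thumb for English text, and the 4,096-token window in the example is an assumed value; check your model’s documentation and use a real tokenizer for accurate counts.

```python
import math


def max_docs_within_budget(
    context_window: int,
    prompt_overhead_tokens: int,
    reserved_for_answer: int,
    chunk_chars: int = 1000,
    chars_per_token: float = 4.0,  # rough heuristic, not an exact tokenizer
) -> int:
    """Estimate how many retrieved chunks fit in the remaining token budget."""
    tokens_per_chunk = math.ceil(chunk_chars / chars_per_token)
    available = context_window - prompt_overhead_tokens - reserved_for_answer
    return max(available // tokens_per_chunk, 0)


# Assumed values for illustration: a 4,096-token window, about 150 tokens of
# prompt instructions, and 500 tokens reserved for the model's answer.
budget = max_docs_within_budget(
    context_window=4096, prompt_overhead_tokens=150, reserved_for_answer=500
)
print(budget)
```

Under these assumptions, the default of k=4 used in this post fits comfortably, with headroom left for longer questions.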

The final code for this process is demonstrated in the get_response_from_query(...) function as shown here:

    def get_response_from_query(db, query, k=4):
        docs = db.similarity_search(query, k=k)
        docs_page_content = " ".join([d.page_content for d in docs])

        # Work with the LLM
        llm = OpenAI(model="gpt-3.5-turbo")

        # Work with the Prompt
        prompt = PromptTemplate(
            input_variables=["question", "docs"],
            template="""
            You are a helpful Virtual Health Coach that can answer questions about the plant based lifestyle
            based on the video's transcript.
            Answer the following question: {question}
            By searching the following video transcript: {docs}
            Only use the factual information from the transcript to answer the question.
            If you feel like you don't have enough information to answer the question, say "I don't know"
            Your answers should be detailed.
            """,
        )

        # Work with the Chain component
        chain = LLMChain(llm=llm, prompt=prompt)
        response = chain.run(question=query, docs=docs_page_content)
        response = response.replace("\n", "")  # formatting
        return response, docs

User interface and experience

Now that the AI’s primary programming has been established, the Streamlit library is used to spin up an interactive web application for a user to engage with the virtual health coach. You can find the code snippet that handles the user interface component of this AI app here:

    import streamlit as st
    import packaging.version
    import virtual_health_coach as eHealthCoach
    import textwrap

    st.title(" :violet[Virtual Health Coach]")

    with st.sidebar:
        with st.form(key="my_form"):
            query = st.sidebar.text_area(
                label="What is your health question?",
                max_chars=50,
                key="query"
            )
            submit_button = st.form_submit_button(label='Submit')

    if query:
        db = eHealthCoach.create_vector_db_from_youtube_url("https://www.youtube.com/watch?v=a3PjNwXd09M")
        response, docs = eHealthCoach.get_response_from_query(db, query)
        st.subheader("Answer:")
        st.text(textwrap.fill(response, width = 85))

For more information about the imported libraries that enable all of this code, or to spin up this application, refer to the ReadMe file.

AI demo app: Virtual health coach

So far, we have a generative AI technology that’s been designed to be a virtual health coach by answering health-related questions. For example, if you asked the chatbot to give you information about the plant-based diet, it’d respond based on the YouTube source provided in the code.

If you pose a question like "How do I ride a bike?", the chatbot may respond in one of a few different ways:

- It will provide an elaborate response based on its own understanding, or on sources outside of the one provided in the code.

- It will provide a response that doesn’t go into much detail, since the YouTube source provided doesn’t directly give insight into that question.

- It will provide a response that may appear to have some truth to it; however, a closer look will reveal that it is quite senseless.

A nonsensical response like the one seen in the last screenshot is commonly referred to as an AI hallucination. This hallucination may seem harmless and even comical in some circumstances, but at scale, it can be problematic. The misinformation can lead to the user making misguided decisions which can potentially lead to legal issues, amongst other things.

Another point to note is that although the chatbot appears to have referenced the YouTube transcript provided in the code, it responded in three different ways, which exhibits the technology’s nondeterministic nature. A business can be deemed unreliable when its chatbot’s responses are only accurate some of the time or include illogical statements. On the backend, erroneous outputs can lead to an overconsumption of resources, including the collection of additional telemetry data such as tokens and embeddings, which can increase overall operational costs.
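One common source of this variation is that language models typically sample each output token from a probability distribution rather than always picking the most likely one. The toy sketch below illustrates the idea with temperature-based sampling; the sample_token helper and the scores are hypothetical and are not taken from the chatbot or from any real model.

```python
import math
import random


def sample_token(
    logits: dict[str, float], temperature: float, rng: random.Random
) -> str:
    """Pick the next token: greedy at temperature 0, random sampling otherwise."""
    if temperature == 0:
        # Greedy decoding: always return the highest-scoring token.
        return max(logits, key=logits.get)
    scaled = {token: score / temperature for token, score in logits.items()}
    top = max(scaled.values())
    # Softmax weights (shifted by the max for numerical stability).
    weights = [math.exp(score - top) for score in scaled.values()]
    return rng.choices(list(scaled), weights=weights, k=1)[0]


# Made-up scores for three candidate next tokens.
logits = {"pedal": 2.0, "balance": 1.5, "kale": 0.5}
rng = random.Random()

greedy = {sample_token(logits, 0, rng) for _ in range(20)}
sampled = {sample_token(logits, 1.0, rng) for _ in range(200)}
print("temperature 0:", greedy)
print("temperature 1:", sampled)
```

With a temperature of 0, the same token is chosen every time; with a higher temperature, repeated runs can produce different tokens, which is one reason the same question may yield different answers.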

Although New Relic can't directly detect hallucinations in your AI application, incorporating it into your tech stack helps you mitigate issues and gain insight into the user experience. Not only will you be able to monitor the performance of the application, but you’ll also see how the user is interacting with the AI component in terms of the input provided and the type of output that’s being generated in response. Gaining insight into this metadata can enable developers to optimize the prompt templates and other variables embedded in the code to help prevent future AI-generated errors.

This diagram provides insight into the technical architectural flow of the virtual health coach chatbot with New Relic as the observability tool.

New Relic AI monitoring capability

As mentioned earlier, generative AI technologies are made up of several layers: the LLM, the orchestration framework, data sources, infrastructure, and the application itself. These layers facilitate user interaction with the application but introduce complexities that affect costs, performance, and user engagement, as measured by throughput (user traffic) and other telemetry data. Understanding these metrics is critical to businesses, which is why AI monitoring was introduced. Observability tools like New Relic allow users to quickly gain holistic insight into all of these telemetry data points, and New Relic’s APM 360 capability automatically detects features specific to AI applications directly through the supported APM agents. Since the virtual health coach is written in Python, we’re going to instrument the application with the New Relic Python agent to observe the various backend processes that keep the application running.

To test out the AI monitoring capability in New Relic:

- Sign in to the New Relic platform at one.newrelic.com.

- Select Add Data.

- Type in Python and select the option under the Application monitoring view.

- Follow the Python-guided installation manual to instrument the application with New Relic and launch the virtual coach.

- Connect your logs and infrastructure.

- Test the connection.

You should now be able to see the application listed in the All Entities view of the platform.

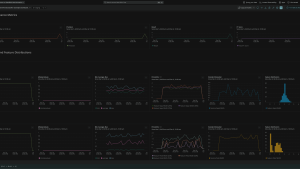

Once you click on the application, not only will you see overall insights into the number of requests your application is receiving, but you’ll also see a new section called AI Responses.

Within the AI Responses view, you’ll be able to gain insight into:

- The number of requests the chatbot receives during a given time.

- The average response time it takes for the chatbot to respond to the user.

- The average token usage per response.

- A timeline showing when throughput was highest.
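To show how summary figures like these relate to individual interactions, here’s a small sketch that aggregates a list of records. It is only an illustration of the arithmetic and not how New Relic computes or stores its data: the Interaction class, the summarize function, and the sample values are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Interaction:
    """One user-to-chatbot exchange (hypothetical record shape)."""

    request: str
    response_time_s: float
    total_tokens: int


def summarize(interactions: list[Interaction]) -> dict[str, float]:
    """Compute request count, average response time, and average token usage."""
    if not interactions:
        return {"requests": 0, "avg_response_time_s": 0.0, "avg_tokens": 0.0}
    return {
        "requests": len(interactions),
        "avg_response_time_s": mean(i.response_time_s for i in interactions),
        "avg_tokens": mean(i.total_tokens for i in interactions),
    }


# Made-up sample records, for illustration only.
records = [
    Interaction("What is a plant-based diet?", 1.5, 900),
    Interaction("How much protein do I need?", 0.5, 1100),
    Interaction("How do I ride a bike?", 1.0, 1000),
]
summary = summarize(records)
print(summary)
```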

There’s also a new Responses table that breaks down the user-to-chatbot interaction even further by showing the exact user request along with the response that the chatbot provided. These granular details give developers a clearer picture of how to optimize the prompt template in the code, and may even prompt them to provide additional data to be stored in and retrieved from the vector database. Adding more tailored resources can, in theory, enrich the knowledge base of the AI, which will guide the chatbot to respond to the user in a more meaningful way.

To gain even more insight into a user’s request, click into a particular request in the request column to discover what’s happening under the hood from the time the chatbot processes the user request until the time it responds. You’ll find the exact GPT model (or LLM) that’s being used for the user request and the GPT model that’s being used in the response. There is also visibility into the total number of tokens that each user-to-chatbot interaction requires, and how close the interaction comes to the maximum token limit for the chat completion.
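If you want to reason about the token figures yourself, the relationship is simple arithmetic. The sketch below is a hypothetical helper, not part of New Relic or the chatbot code: it computes what fraction of the token limit an interaction used and flags interactions that come close to it. The 4,096-token limit and the 90% threshold are assumed values for illustration.

```python
def token_limit_usage(
    prompt_tokens: int, completion_tokens: int, max_tokens: int
) -> float:
    """Fraction of the token limit consumed by one interaction."""
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    return (prompt_tokens + completion_tokens) / max_tokens


def is_near_limit(
    prompt_tokens: int,
    completion_tokens: int,
    max_tokens: int,
    threshold: float = 0.9,  # assumed cutoff; tune it for your application
) -> bool:
    """Flag interactions that use at least `threshold` of the limit."""
    return token_limit_usage(prompt_tokens, completion_tokens, max_tokens) >= threshold


# Made-up token counts and an assumed 4,096-token limit.
usage = token_limit_usage(prompt_tokens=1200, completion_tokens=300, max_tokens=4096)
print(f"{usage:.0%} of the token limit used")
```

Interactions that repeatedly approach the limit are good candidates for a smaller k or a shorter prompt template.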

These details are key for an AI developer to assess and further optimize the performance of the application to keep the costs as low as possible, while also understanding the overall user experience to make it more seamless.

Conclusion

Throughout this blog you’ve learned how to:

- Build a simple chatbot application;

- Understand the concerns, such as hallucinations and nondeterministic responses, that can arise as a result of its creation; and

- Gauge the user engagement and other important metrics within the app through the New Relic AI monitoring feature.

You saw how New Relic’s AI monitoring capability can give AI developers comprehensive insight into AI apps such as chatbots, including the specifics of the user requests, the responses returned, the average response time for the chat completion, the tokens used throughout the process, the LLM used, and much more. With New Relic’s AI monitoring, you can navigate many of the complexities introduced by AI applications so you can focus on developing a seamless user experience while managing the business’s priorities (such as costs) accordingly.

Next steps

To get started with using New Relic AI monitoring, all you have to do is:

- Sign up for or log in to your New Relic account today, and

- Request early access to the AI Monitoring limited preview feature.
